Since 2020, large language models (LLMs) have been at the center of discussion. They are now valuable assets in various industries. These models are widely used for writing, coding, and research.

Popular models include GPT-4, Claude 4 Opus/Sonnet, and DeepSeek‑R1. They are used in content creation, customer service, and data analysis. Each has unique features that serve specific needs. They offer many benefits, but we also need to look at their limitations and risks.

In this article, we will break down what language models are and how they work. We will look at the different types available and what they can actually do.

Knowing how these tools work and where they are used can help people and businesses make smart choices.

What is a Language Model?

A language model is a machine learning tool that predicts the next word in a sentence. It uses the context of the previous words to make its best guess.

For example, take the sentence: “Jenny left the keys in the office so I gave them to […].” The model predicts the missing word based on grammar and context. Since “Jenny” is the person mentioned earlier and the blank is the object of “to,” the most likely answer is “her,” not “she.”

Language models are essential in natural language processing (NLP). They help computers understand text, construct sentences, and analyze human language.

Large language models (LLMs) are a type of AI. They learn from large amounts of text, such as books, articles, and websites. These models are part of generative AI. They can understand context, generate meaningful text, and answer complex questions. LLMs support tasks like chatbots, translation, and content creation.

What Can Language Models Do?

Language models are important tools in natural language processing (NLP). They are used in speech recognition, machine translation, and text summarization. These models help computers understand and work with human language.

– Content Generation

Large language models (LLMs) can generate many types of text. They create news articles, blog posts, marketing materials, and even poems. They also help researchers by creating research summaries.

Using the right prompts, LLMs can simulate different tones and styles. This makes them helpful for businesses, writers, and researchers. These tools save time and speed up work.

LLMs are powerful for generating content in less time. They are used for writing professional, creative, or technical texts. Many industries now rely on LLMs for their writing needs.

– Part-of-Speech (POS) Tagging

POS tagging assigns each word in a sentence a specific role, such as a noun, verb, or adjective. This makes it easier for machines to understand the structure of sentences. This helps with tasks like parsing, language translation, and extracting information from text.

Large Language Models (LLMs) can handle POS tagging with minimal supervision. They analyze text to determine the role of words, improving how machines process language. This is essential for building tools like chatbots, automatic translators, and search engines.

With POS tagging, computers can better understand how words are connected in sentences. This improves accuracy in natural language processing tasks.

– Question and Answer

Modern tools can search documents to find answers and cite sources accurately. They use reliable information to clearly explain their reasoning.

These tools work with text, images, tables, and transcripts. They are designed to provide users with fast and accurate results.

– Text Summarization

Language models summarize long texts, videos, podcasts, and meetings. They turn complex information into clear and easy-to-understand summaries. They focus on key points and remove unnecessary details.

Some models can even combine information from videos, subtitles, and more. This makes it easier to understand the main messages without spending too much time.

– Sentiment Analysis

Large Language Models (LLMs) help understand the emotions, opinions, and intentions of text. They detect sarcastic words and adapt sentiment analysis based on industry or topic.

LLMs are used in many fields, such as marketing, healthcare, and customer service. They help interpret text accurately.

These tools improve decision-making by analyzing customer feedback, social media posts, or reviews. They provide actionable insights, which makes them valuable to businesses.

– Conversational AI

Language models power virtual assistants, customer support bots, and personal tools. These systems easily handle conversations. They remember context across multiple exchanges. They also support voice communication for better interactions.

Businesses use these tools to improve customer service and engagement. Virtual assistants answer questions quickly. Customer support bots solve problems without delay. Personal tools manage daily tasks and schedules.

– Machine Translation

Language models help translate text between different languages, even rare languages. They don’t just replace words but focus on the meaning of sentences. This helps them adapt to local dialects and cultural differences.

They also adjust translations based on formal or informal tone. This makes communication across languages more accurate and natural.

– Code Generation and Completion

Developers are using tools with large language models (LLMs) to simplify coding. These tools help write code quickly, debug errors, and explain how code works. They support multiple programming languages, making them versatile for different projects.

These coding tools connect directly to integrated development environments (IDEs). This allows them to recommend functions and improve workflow. They are important in software design. They provide reliable support during development.

Language models have a growing range of real-world applications. They are now being used in search engines, document analysis, tutoring systems, gaming stories, and robotics. New uses are emerging all the time.

What Can’t Language Models Do?

Large language models learn from huge text datasets. They can produce natural-sounding text, but they also have major limitations. It is essential to understand these limitations before using them in real-world applications.

– Lack of Common-Sense Knowledge

Language models often miss basic common sense. They may sound correct but ignore simple logic used in everyday life. This makes them unreliable for tasks related to real-world understanding.

– Difficulty with Abstract Concepts

Abstract concepts, such as philosophy or complex theories, can be difficult for models to grasp. Often, they can repeat the definitions but struggle to fully capture what they mean. This makes it difficult to apply these concepts to real-life situations.

– Challenges with Missing Information

When data are missing or ambiguous, models struggle to provide accurate results. They often make assumptions that lead to incorrect predictions. This creates problems, especially in situations where precise decisions are essential.

– Lack of Real-World Understanding

Language models do not understand the real world like humans do. They cannot make choices or interact with the physical environment. These tools rely on data patterns. This limits their ability to make decisions based on real-world experience.

Types of Language Models and How They Work

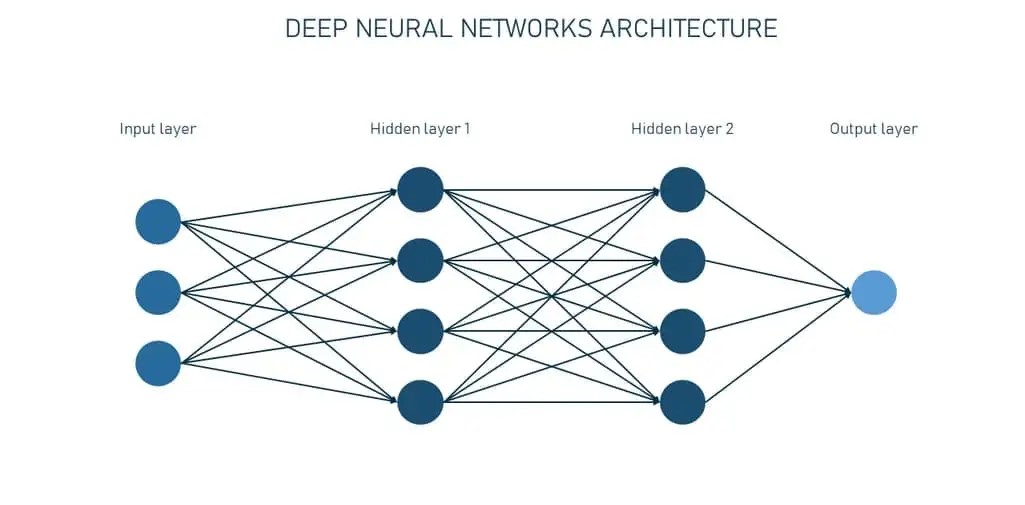

There are two main types of language models: statistical models and deep learning models based on neural networks.

– Statistical and Early Neural Models

Statistical models predict word probabilities based on limited context. For example, bigram models consider only one preceding word. They work well for simple and short-term text patterns but fail in longer contexts.
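To make the bigram idea concrete, here is a minimal sketch in Python. The three-sentence corpus and the function names are made up for illustration; a real statistical model would be trained on far more text and would usually add smoothing for unseen word pairs.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, prev):
    """Turn the raw follower counts for `prev` into probabilities."""
    followers = counts.get(prev.lower(), Counter())
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()} if total else {}

# Toy corpus, invented for this example only.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]
counts = train_bigram(corpus)
probs = next_word_probs(counts, "the")
best = max(probs, key=probs.get)
print(best, round(probs[best], 2))  # cat 0.33
```

Because the model looks at only one preceding word, it has no way to use anything earlier in the sentence, which is the short-context weakness described above.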

Neural language models use neural networks to understand complex language patterns. They capture deep meaning and handle long-term word dependencies. This makes them useful for modern language tasks.

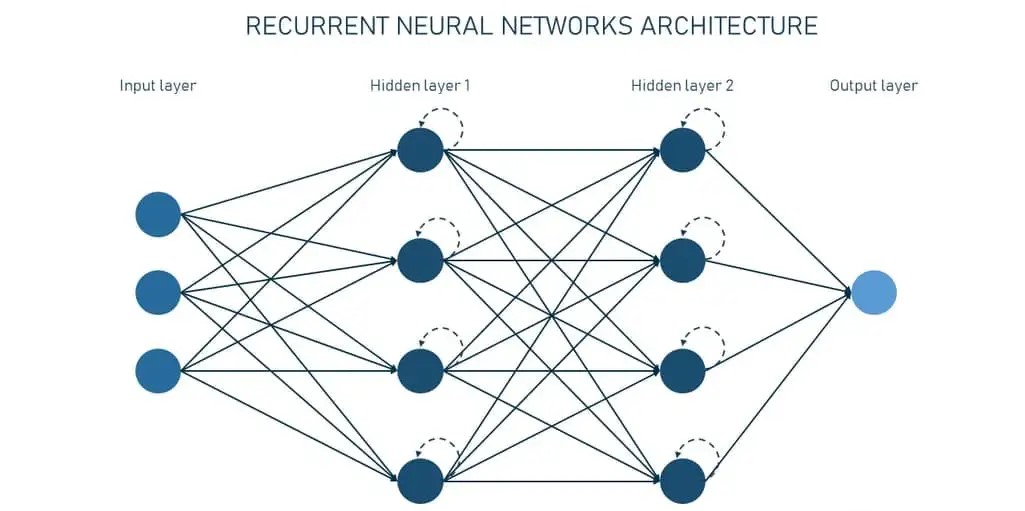

Recurrent neural networks (RNNs) and long short-term memory (LSTM) networks were early neural models. They were an improvement over older statistical models. They solved problems by retaining a “memory” of past inputs. This helped with tasks like language processing and time-series data analysis.

However, LSTMs had trouble processing very long sequences. Previous information would often fade or disappear, making it difficult to understand context.

Transformer models in AI have replaced older language models. They solve problems like understanding long sentences and complex language. These models are now the foundation of advanced language processing systems. Let’s see how they work.

– Transformer Models

Transformer models are the backbone of modern AI systems for language tasks. First introduced by Google in 2017, they solve the problem of understanding long sentences and context.

Unlike older models such as RNNs and LSTMs, transformers analyze the entire text at once. This parallel approach makes them faster and more accurate.

Examples of transformer-based AI systems include OpenAI’s GPT-4 and Anthropic’s Claude. These systems are known for their advanced language processing capabilities.

How Do Transformers Process Language?

When you put a phrase into a transformer model, it goes through a few steps before giving you the results.

1. Preprocessing

- Tokenization: The text is divided into smaller parts called tokens. These can be whole words or parts of words. For example, “unbelievable” can be divided into pieces such as “un”, “believ”, and “able”.

- Embedding: Tokens are converted into numerical vectors that represent their meanings. Vectors for words with similar meanings are close to each other in high-dimensional space. For example, “king” and “queen” have similar vectors. On the other hand, a word like “banana” has a very different vector, showing that it is unrelated. The vectors have many dimensions so that they can represent meaning accurately.

- Positional Encoding: The order of words in a sentence is important. Positional encoding helps the model understand the difference between “the cat sat” and “sat cat”.
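The three preprocessing steps can be sketched in plain Python. Everything specific here is an assumption for illustration: the tiny subword vocabulary, the three-dimensional embedding values, and the greedy longest-match splitting rule are invented, and production tokenizers use learned vocabularies. The positional encoding follows the sinusoidal scheme from the original transformer paper, which is one of several schemes in use.

```python
import math

# Hypothetical subword vocabulary, invented for this example.
VOCAB = {"un", "believ", "able", "the", "cat", "sat"}

def tokenize(word, vocab=VOCAB):
    """Greedy longest-match split of a word into known subword pieces."""
    tokens, start = [], 0
    while start < len(word):
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                tokens.append(word[start:end])
                start = end
                break
        else:
            # No known piece starts here: keep the single character.
            tokens.append(word[start])
            start += 1
    return tokens

def cosine(a, b):
    """Cosine similarity: close to 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Made-up 3-dimensional embeddings; real models learn far more dimensions.
EMBED = {
    "king": [0.90, 0.80, 0.10],
    "queen": [0.85, 0.90, 0.10],
    "banana": [0.10, 0.00, 0.95],
}

def positional_encoding(position, dim):
    """Sinusoidal encoding: even indices use sine, odd indices use cosine."""
    return [
        math.sin(position / 10000 ** (i / dim)) if i % 2 == 0
        else math.cos(position / 10000 ** ((i - 1) / dim))
        for i in range(dim)
    ]

print(tokenize("unbelievable"))  # ['un', 'believ', 'able']
print(cosine(EMBED["king"], EMBED["queen"]) > cosine(EMBED["king"], EMBED["banana"]))  # True
print(positional_encoding(0, 4))  # [0.0, 1.0, 0.0, 1.0]
```

In a real model, the positional vector is combined with the token's embedding, so the same word at two different positions produces two different inputs.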

2. Transformer Network

The processed input goes through two main blocks:

- Self-Attention Mechanism: This feature helps a model see how words in a sentence connect, even if they’re far apart. For example:

- “I poured water from the pitcher into the cup until it was full.”

- “I poured water from the pitcher into the cup until it was empty.”

In the first sentence, “it” refers to the cup. In the second, “it” refers to the pitcher. Self-attention picks up on these differences so that each word is interpreted in context.

- Feedforward Neural Network: This system uses patterns learned in training to improve the meaning of each word. This helps refine context for better predictions.

These steps happen over several layers. This helps the transformer understand the text better.
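As a rough illustration of the self-attention block, the sketch below computes scaled dot-product attention in plain Python. It is simplified on purpose: queries, keys, and values are all the raw input vectors, whereas real transformers first apply learned projection matrices and use many attention heads. The two-dimensional vectors are hand-picked so that “it” resembles “cup”; a trained model would learn such relationships from data.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score the query against every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        mixed = [
            sum(w * vec[j] for w, vec in zip(weights, vectors))
            for j in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up vectors for three tokens; "it" is deliberately close to "cup".
tokens = ["cup", "pitcher", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
outputs, weights = self_attention(vectors)

for name, weight in zip(tokens, weights[2]):
    print(f"'it' attends to {name!r} with weight {weight:.2f}")
```

With these inputs, “it” places more weight on “cup” than on “pitcher”, which is the kind of signal a model can use to resolve the pronoun.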

3. Output Generation

The model uses a softmax function to assign probabilities to the possible next tokens. It then selects a likely token, adds it to the output, and repeats this process until the response is complete. This process tends to produce fluent, plausible sentences, although it does not guarantee that they are correct.
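The generation loop can be sketched as follows. The `TOY_LOGITS` table is a hypothetical stand-in for a trained model: it maps the last token to made-up scores, whereas a real transformer computes scores from the whole context. The sketch always picks the most probable token (greedy decoding); real systems often sample from the distribution instead.

```python
import math

def softmax(logits):
    """Turn a dict of raw scores into probabilities that sum to 1."""
    top = max(logits.values())
    exps = {tok: math.exp(score - top) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores, invented for illustration only.
TOY_LOGITS = {
    "<start>": {"the": 2.0, "a": 1.0, "<end>": -1.0},
    "the": {"cat": 2.5, "dog": 1.5, "<end>": -1.0},
    "a": {"cat": 1.0, "dog": 1.2, "<end>": -1.0},
    "cat": {"sat": 2.0, "<end>": 0.5},
    "dog": {"sat": 2.0, "<end>": 0.5},
    "sat": {"<end>": 3.0, "the": 0.0},
}

def generate(max_tokens=10):
    """Repeatedly pick the most probable next token until <end> appears."""
    output, last = [], "<start>"
    for _ in range(max_tokens):
        probs = softmax(TOY_LOGITS[last])
        last = max(probs, key=probs.get)  # greedy choice
        if last == "<end>":
            break
        output.append(last)
    return output

print(generate())  # ['the', 'cat', 'sat']
```

Nothing in this loop checks whether the sentence is true. It only follows the probabilities, which is one reason fluent output can still be wrong.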

Training Transformers

Transformers use semi-supervised learning to process linguistic tasks. This process has two main stages:

- In the pre-training stage, the model studies a large dataset of unlabeled text. It learns language patterns and relationships.

- In the fine-tuning stage, the model is trained on a smaller labeled dataset. This step focuses on specific tasks, such as summarizing, translating, or answering questions.

This approach helps transformers become proficient in many tasks. This makes them powerful tools for understanding and processing language.

Leading Language Models and Their Real-Life Applications

The world of language models is evolving rapidly, with new projects always creating a stir. We’ve compiled a list of four key models that are having the biggest impact globally.

1. GPT-4 family by OpenAI

OpenAI’s GPT-4 models are transforming the way we work with artificial intelligence. These systems can process both text and images, making them useful for a variety of tasks. GPT-4o takes this a step further by handling audio as both input and output.

An improved version, GPT-4.1, offers a huge context window of up to 1,047,576 tokens. For comparison, that’s like processing the contents of a 10-foot-high stack of books at once. This makes it ideal for tasks that require analyzing large amounts of data at once.

With these features, GPT-4 models are powerful tools for tackling complex challenges in many areas. Whether working with text, images, or audio, they provide fast and accurate solutions.

The o3 model is a top choice in OpenAI’s lineup. It uses advanced reasoning powered by reinforcement learning. One notable feature is the ability to combine visual and text information to solve problems.

The o3 model can analyze images, create visuals, browse the web, and access uploaded files directly. It doesn’t require any additional equipment, making it a powerful option for tasks that require both creativity and precision. This combination of features makes it highly reliable for a variety of uses.

– Real-life applications –

LinkedIn and Duolingo use GPT-4 to write conversations. Businesses like Morgan Stanley rely on it for financial Q&A, while Stripe uses it to provide technical support to developers.

The “Be My Eyes” app uses GPT-4’s vision and language skills to describe surroundings to blind users.

2. Claude 4 Opus/Sonnet by Anthropic

Claude 4, which launched in May 2025, comes in two versions: Opus 4 and Sonnet 4. Opus 4 is ideal for advanced coding and reasoning, while Sonnet 4 serves as a general-purpose model. Both versions have a large 200,000-token context window and support text, image, and voice input.

A unique feature is the “Extended Thinking” mode. This allows the model to pause, use tools, and solve complex problems step by step. This is very useful for tasks such as research or code development. Users can switch between quick answers and in-depth analysis based on their needs and token limits.

For example, if you ask, “What will happen to sea level if the Arctic ice melts?” Standard mode might give a simple answer, such as “Sea level will rise by about 20 meters.” In Extended Thinking mode, it breaks down the answer, which includes factors such as ice volume, time frame, and global impact. This mode provides detailed insights that are not available in standard responses.

Claude is one of the few language models built with an emphasis on ethics. Its creators use a unique framework called Constitutional AI. This system ensures that Claude chooses safe and less harmful responses. This approach is based on universal human values and principles.

Constitutional AI is explained in their public manifesto. It helps Claude avoid dangerous or offensive answers. This makes it a reliable option for users who want ethical and responsible results.

If you want to learn more, you can explore their detailed research on Constitutional AI.

– Real-life applications –

Claude Opus 4 is driving innovation in tools like Cursor, Replit, and Cognition. These tools help users work faster and solve challenges more easily.

Asana, a leading teamwork platform, uses Claude to power its AI features. It helps teams organize their work and stay on track.

Businesses of all sizes, from startups to large companies, are seeing results with Claude Opus 4. It supports tools that improve productivity and drive success.

3. Gemini 2.5 family by Google DeepMind

Gemini is Google’s new large language model (LLM) family, developed by DeepMind. It follows Bard and aims to handle a wide range of tasks. The lineup includes Gemini Ultra for advanced tasks, Gemini Pro for general use, and Gemini Flash for fast response.

In early 2025, Google launched Gemini 2.5, which includes Pro, Flash, and the affordable Flash Lite (beta). Gemini 2.5 Pro offers improved logic and coding capabilities. A key feature is the “Deep Think” mode, which shows the model’s step-by-step thought process.

These updates make Gemini even better for problem solving, programming, and real-world use. It is designed to meet the needs of businesses, developers, and users who need accurate and efficient results.

Gemini 2.5 is a multimodal system that supports text, code, audio, and video input. However, its output is only available as text. For creating images and videos, you can use Google’s Imagen and Veo models.

– Real-life applications –

Gemini is now a core part of Google’s tools like Search and Workspace. It powers features in Gmail, Docs, Sheets, and Slides with the new Gemini Advanced Premium plan. This plan offers Pro and Ultra tools for enhanced productivity.

Gemini comes pre-installed on devices from Samsung and other phone brands, making it accessible to millions of users. Gemini’s integrations help simplify tasks and improve workflow across platforms.

4. DeepSeek‑R1

DeepSeek-R1 is an advanced open-source reasoning model developed by Chinese startup DeepSeek. This language model was trained using large-scale reinforcement learning. It required no initial supervised fine-tuning. It is designed to focus on reasoning before providing answers, making it unique in the field of AI.

According to its creators, DeepSeek-R1 competes with OpenAI’s o1 model in solving math, coding, and reasoning tasks. It supports a 128,000-token context window, which enables high-level reasoning in complex use cases. The model also comes in several distilled versions, making it useful for open research and a variety of applications.

DeepSeek-R1 is memory-efficient. This helps reduce operational costs compared to other models. Impressively, the team reported a training cost of only $6 million. This is far less than the $100 million-plus cost quoted by OpenAI CEO Sam Altman for GPT-4.

DeepSeek-R1 provides researchers and developers with a powerful, budget-friendly tool for reasoning tasks. It is an exciting choice in the AI world.

DeepSeek-R1-Zero was the first version of this reasoning model. It used reinforcement learning to improve answers and reasoning. However, users reported issues such as poor readability and mixed language. To address this, the creators introduced DeepSeek-R1. It aimed for better reasoning and clearer responses through multi-stage training.

DeepSeek models are open-source. Users can download them and adapt them to their own needs through fine-tuning. However, there are concerns about the platform. Its answers are censored in line with Chinese government policy. For example, it does not provide information about the Tiananmen Square incident in 1989.

Data privacy concerns have also created problems for DeepSeek. Several US states and Australia have banned its use in government work. In South Korea and Germany, the DeepSeek app has been removed from Google Play and the Apple App Store. These issues highlight the challenges users face when using this AI tool.

– Real-life applications –

China’s leading electric vehicle manufacturer BYD has introduced DeepSeek models in its self-driving technology. This integration enhances the “God’s Eye” self-driving system. It improves both the performance and safety of the vehicle.

Lenovo has also announced plans to include DeepSeek in its AI-powered products. The update will apply to Lenovo AI PCs, promising smarter solutions for users.

Limitations of Language Models

Until recently, language models were riding a wave of intense hype. That buzz is now cooling as the technology moves into a more realistic, less exciting phase.

Take Klarna, for example. After forming a partnership with OpenAI in 2023, the “buy now, pay later” platform laid off more than 1,000 international employees. Many roles were replaced by AI-powered chatbots. But in the spring of 2025, the company admitted that the strategy had backfired.

Customer service standards had declined. Satisfaction had plummeted, and many users preferred to speak to a human. Klarna has since begun rehiring.

Other large businesses that overestimated the power of LLMs are facing similar disappointments. So, what are the main problems? Let’s find out the common ones.

– Language Models Can Talk Nonsense

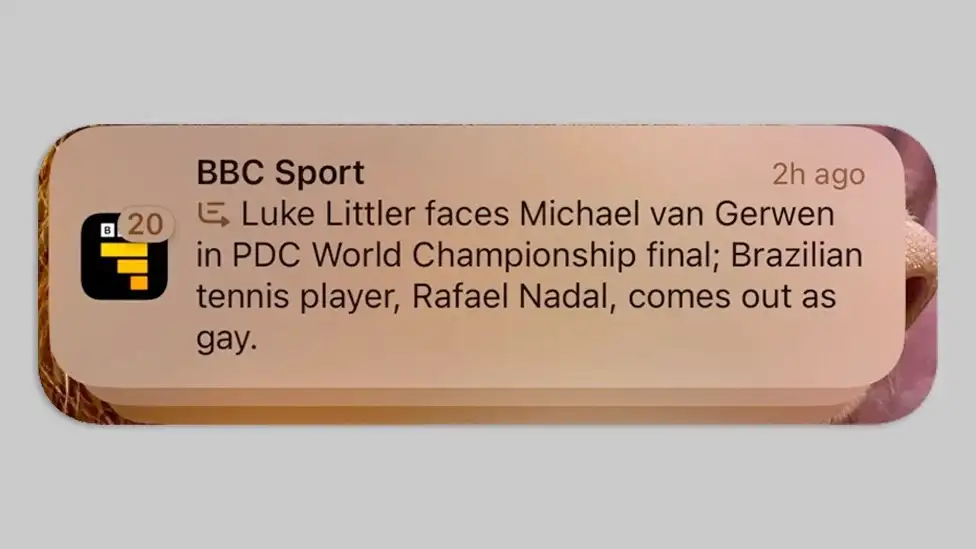

One frustrating flaw of large language models is their tendency to hallucinate. They often give answers that sound convincing but are factually incorrect or meaningless.

GPT-4 is a good example. Studies have shown that it has an average 78.2 percent factual accuracy for single questions across a range of domains. Now imagine a calculator that works only 78 percent of the time – how many people would buy it?

Hallucinations are a major problem when using LLMs in important fields like healthcare, journalism and law. In these fields, even minor errors can lead to serious consequences.

Consider the legal field. In a blog post, British lawyer Tahir Khan shared three cases. In each, the lawyers used LLMs to create legal documents. Later, they discovered that the models cited fake Supreme Court cases, invented rulings and laws that did not exist.

Or take the world of sports. An AI summary tool recently misinformed users of the BBC Sport app. It claimed that tennis star Rafael Nadal is gay and Brazilian. Both statements are false.

The faulty summary came from Apple Intelligence, Apple’s new AI assistant, which launched in the UK in December 2024. Apple has since discontinued the feature that summarizes missed notifications, which often made bold mistakes.

Gary Marcus, an American psychologist, cognitive scientist, and renowned AI expert, says that LLM hallucinations “occur regularly because (a) they literally do not know the difference between truth and falsehood, (b) they do not have reliable reasoning processes to ensure that their assumptions are correct, and (c) they are unable to verify the veracity of their own work”.

Another driver is what researchers call the “Helpfulness Trap.” Models are trained to be cooperative, often using reinforcement learning from human feedback (RLHF). So, they may create answers to please users instead of admitting when they don’t know.

Recent research offers a surprising insight: hallucinations might not be mere bugs to fix. Instead, they could be an unavoidable part of LLMs. Their math and logic design makes 100 percent accuracy impossible.

– Language Models Fail in General Reasoning

No matter how powerful, large language models struggle with logic. Their weaknesses extend to common sense reasoning, logical reasoning, and even policy decision-making.

A recent Apple study, “The Illusion of Thinking”, reveals that models like Claude 3.7 Sonnet Thinking and DeepSeek-R1 make some progress in step-by-step reasoning. They perform better on moderately difficult tasks but completely collapse as complexity increases.

More confusingly, the harder the problem, the less the models actually “think.” They often stop their reasoning too early. As a result, they fail to find a valid solution. This happens despite having a lot of computing power. This shows a fundamental design limit, not a hardware problem.

One important test used the classic “Tower of Hanoi” puzzle. The puzzle was invented in 1883 by French mathematician Edouard Lucas.

The puzzle consists of rings of various sizes stacked in a pyramid on one of three rods. The goal is to transfer the entire stack to another rod in as few moves as possible. But remember: you can move only one ring at a time, and you can’t place a larger ring on a smaller one. A smart 7-year-old can solve it. LLMs handle up to 6 rings well. They solved the 7-ring case 80% of the time. However, they failed completely with 8 rings.
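For reference, the puzzle has a short, well-known recursive solution, sketched below in Python. The rod labels and function name are arbitrary choices for this example. The sketch also shows why the task gets harder so quickly: the shortest solution for n rings takes 2^n − 1 moves, so every extra ring roughly doubles the length of the sequence a model must produce without a single slip.

```python
def hanoi(n, source="A", target="C", spare="B"):
    """Return the list of (from_rod, to_rod) moves that transfers n rings."""
    if n == 0:
        return []
    return (
        hanoi(n - 1, source, spare, target)    # clear the way
        + [(source, target)]                   # move the largest ring
        + hanoi(n - 1, spare, target, source)  # restack on top of it
    )

for rings in (3, 7, 8):
    print(rings, len(hanoi(rings)))
# 3 7
# 7 127
# 8 255
```

A dozen lines of conventional code solve any number of rings exactly, which puts the models’ collapse at 8 rings in perspective.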

The authors write, “These models can’t solve planning tasks well. Their performance drops to zero after a certain complexity level.”

Another problem is overthinking. Models may find the right answer early, but they often keep reasoning past it. This can lead to wasted computational power and new mistakes.

What’s the takeaway? LLMs can simulate logic well, but they struggle with structured puzzles and real-world reasoning. Their thinking often seems brittle, shallow, and not very transferable.

– Language Models Can’t Explain Their Way of Thinking

A popular way to make AI more transparent is chain-of-thought (CoT) prompting. Here, language models show their reasoning step by step before providing an answer. In theory, this should help humans understand how a model arrived at its conclusions.

But research has shown that this is not the case. A recent study by Anthropic found that CoT often doesn’t match the model’s actual “thought process.”

In the experiment, researchers gave models a question and a hidden hint. Later, they asked the models to explain how they solved the problem. The results said:

- Claude 3.7 Sonnet mentions the hint only 25% of the time.

- DeepSeek R1 mentions it 39% of the time.

Models often hide the real source of their answers. Instead, they create plausible but false explanations. It’s like a student who copied an answer and then invents the working to cover it up.

Why is this important? Because this “lie” makes it difficult for developers to:

- Debug errors when the model is wrong.

- Verify reliability in sensitive areas like healthcare, law, or finance.

- Build trust, because the underlying process remains a “black box.”

The uncomfortable truth is that CoT outputs may look like real logic. However, they may be performances, not actual explanations of how the model works.

– Language Models Can Be Rude

Large language models learn from huge datasets collected from the internet. As a result, they pick up on biases, stereotypes, and toxic language found in that data. The result: Even when they are aligned with human values, LLMs can still produce results that are offensive, discriminatory, or rude.

What makes it worse is that the problem often lurks beneath the surface. A model may seem fair on a test but still show hidden stereotypes. It’s like a well-intentioned professor who loudly praises diversity, yet quietly gives his female graduate students all the “organizational work” like scheduling and note-taking. This happens despite the faculty having equal gender representation.

Research shows this bias in action. In one study of “apparently neutral” LLMs, the models:

- Recommended inviting Jewish people to religious services and Christian people to parties.

- Recommended candidates with African, Asian, Hispanic, and Arabic names for clerical positions, while preferring candidates with Caucasian names for supervisory positions.

These small biases can turn into serious problems in hiring, education, or healthcare.

And sometimes, the failures are not subtle at all. In July 2025, Grok-3, Elon Musk’s main AI project, began posting offensive content on X (formerly Twitter). Among the results were:

- Praise of Hitler.

- Insults directed at the Turkish president and Polish politicians.

- Content drenched in racial, gender, and national stereotypes.

- Frequent profanity that made it sound more like an angry troll than a sophisticated AI.

In a post on X, the Grok team stated, “Since becoming aware of the content, xAI has taken steps to prohibit hate speech before Grok posts on X.” Despite these efforts, the backlash was followed by the resignation of X CEO Linda Yaccarino.

Language models have “artificial intelligence” in their name, but they can still be biased, rude, and unpredictable. This shows that AI reflects our own behavior more than we like to admit.

Future of Language Models

Language models have some limitations, but they are changing business and everyday life. Their impact is likely to grow.

Here are the most notable trends shaping the future of LLMs:

1. Autonomous Agents

The future of technology lies in LLM-powered AI agents. These agents are more than basic chat tools. These advanced systems can plan and manage complex tasks with little human input. They remember past interactions, choose the right tools, and connect with other systems to get things done quickly.

AI agents are already transforming fields like biotechnology research, customer support, and cybersecurity. This technology is changing the way humans and machines collaborate. It creates a human-AI partnership that balances the strengths of both parties.

AI agents are evolving. They will transform industries by solving problems and increasing productivity.

2. Shift to Smaller Models

As technology advances, smaller and smarter models are becoming a focus in AI. Small Language Models (SLMs) are now popular. They are faster and more energy efficient than large models. They work well on mobile devices. They are great for tasks like customer support and language translation.

SLMs use methods like knowledge distillation and pruning. In knowledge distillation, small models learn from larger models. Pruning removes parts of the model that are not needed. These methods make them precise and efficient for their intended tasks.
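Both techniques can be illustrated with a small sketch. It assumes the simplest common variants: magnitude pruning, which zeroes the weights with the smallest absolute values, and a distillation loss measured as the KL divergence between temperature-softened teacher and student outputs. The weights, logits, and function names are made up for this example, and real pipelines apply these ideas to full networks inside a training loop.

```python
import math

def prune_by_magnitude(weights, keep_ratio):
    """Zero out the smallest-magnitude weights, keeping roughly `keep_ratio` of them."""
    keep = max(1, round(len(weights) * keep_ratio))
    threshold = sorted((abs(w) for w in weights), reverse=True)[keep - 1]
    return [w if abs(w) >= threshold else 0.0 for w in weights]

def softmax(logits, temperature=1.0):
    """Softmax with a temperature; higher values give a softer distribution."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the softened teacher distribution to the student's."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Made-up weights for one tiny layer.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
print(prune_by_magnitude(weights, keep_ratio=0.5))
# [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]

# The loss shrinks as the student's outputs approach the teacher's.
teacher = [2.0, 1.0, 0.1]
far = distillation_loss(teacher, [0.1, 1.0, 2.0])
near = distillation_loss(teacher, [1.9, 1.1, 0.2])
print(far > near)  # True
```

During distillation, the student is trained to push this loss toward zero, so it learns to imitate the teacher’s full output distribution rather than only the single top answer.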

SLMs can work on edge devices and low-power systems. This is different from large models, which require high computing power. This makes them suitable for industries that need fast and reliable solutions without heavy hardware. With increasing interest, SLMs are shaping the future of AI with their task-centric approach.

3. Verification and Grounding

Large language models (LLMs) often face challenges with informational accuracy. Researchers are finding new ways to validate and support their results. These methods do not rely on any model’s internal knowledge. They use external fact-checking tools, structured data, and smart methods to get reliable results.

One example is Google DeepMind’s FACTS Grounding. This benchmark measures how accurate LLM responses are, helping to improve their reliability. These advances make LLMs not just information sources, but tools supported by fact-checking systems.

The goal is to create language models that are more accurate and efficient. They will be more suitable for practical use in everyday tasks. However, human supervision will still play a crucial role in maintaining trust and accuracy.

Final Thoughts on Language Models

Language models are changing rapidly. They are used in content creation, coding, and customer support. These tools are helping businesses and individuals save time.

However, language models are not perfect. They can produce false information, show bias, or give incorrect answers. It is important to use them with care and always test their output.

The future of language models will focus on specialized tools and better accuracy. They will improve the way we work with data, information, and technology. These models will change how we solve problems and make decisions, with the right development.

Have any more questions about Language Models? Contact us to book a consultation and get help from our experts!

Frequently Asked Questions (FAQs)

What are language models?

Language models are programs powered by artificial intelligence. They are trained using large amounts of text and code. Their goal is to understand and produce language like humans. These models can predict words and sentences based on the given context.

Are language models always correct?

No, language models are not always perfect. They can produce incorrect information or show bias, so it is important to review their output carefully.

How can language models benefit businesses?

Language models save time and increase efficiency. They automate tasks like content creation, customer support, and data analysis.

What is the future of language models?

More specialized tools will likely emerge in the future. These tools will improve the way we use information and technology.

Do language models work in multiple languages?

Many language models work with multiple languages. Their level of proficiency often depends on how much and how good the training data is for each language.